SEO in 2026 rewards sites that prove their value quickly and clearly. Search engines now weigh page experience, topical coverage, and trust signals more strictly, while AI-generated results change how people click and compare sources. At the same time, core basics still drive rankings: clean technical setup, useful content that answers specific queries, and links earned from credible sites. This guide reviews what has shifted, what still works, and where to focus effort.

Key takeaways

- AI Overviews reduce clicks, so pages must answer queries clearly and early.

- Relevance, crawlability, and verified quality signals still drive durable rankings.

- Build topical coverage with original data and first-hand, traceable evidence.

- Prioritise clean indexing, Core Web Vitals targets, and valid structured data.

- Measure SEO with GA4 outcomes, Search Console queries, and controlled testing.

How Google’s 2026 SERPs and AI Overviews Changed Click Behaviour

Google’s 2026 results pages change click behaviour by answering more queries directly on the page and pushing organic links lower. Google Search uses AI Overviews to summarise information and cite sources, which reduces the need to open multiple tabs for basic definitions and comparisons.

AI Overviews work by extracting and synthesising content from indexed pages, then presenting a short response above or within the main results. This layout competes with the classic “10 blue links” for attention because users can scan an answer, refine the query, or click a cited source without scrolling. Rich results such as product data, reviews, and “People also ask” boxes also take more space, which changes which positions earn the most clicks.

For publishers, the practical implication is that ranking alone does not guarantee traffic. Pages need to match the query intent tightly, answer the key question early, and support claims with clear evidence that Google can quote. Strong on-page structure helps extraction: descriptive headings, short definitions, and consistent terminology improve how systems interpret the page. Brand trust also matters more because users choose fewer clicks; clear authorship, transparent sourcing, and easy-to-verify facts increase the chance of being selected as a cited result.

What Still Drives Rankings: Relevance, Crawlability, and Quality Signals

SEO in 2026 rewards the same fundamentals as earlier years, but the weighting has shifted. Two approaches compete. Option A chases new formats and short-lived tactics. Option B builds pages that match intent, load reliably, and earn trust signals. Option B still produces durable rankings because it aligns with how Google Search works: crawl, index, then rank based on relevance and quality.

| Driver | What still works | What fails more often in 2026 |
|---|---|---|
| Relevance | Clear topic focus, strong on-page headings, and direct answers to the query. | Broad pages that target many intents with thin coverage. |
| Crawlability | Clean internal linking, indexable templates, and fast server responses. | JavaScript-heavy navigation, orphan pages, and blocked resources. |
| Quality signals | Original analysis, accurate sourcing, and visible author and business details. | Rewritten content, unclear ownership, and weak editorial standards. |

Key differences come down to verification and consistency. Google can crawl more, but it still depends on accessible URLs, sensible canonicals, and stable status codes. Quality evaluation also leans harder on evidence: cite primary sources, keep facts current, and show who is responsible for the content.

- Audit indexation monthly in Google Search Console and fix coverage issues before scaling content.

- Strengthen internal links to your highest-value pages using descriptive anchor text.

- Publish content that adds unique value: data, methods, comparisons, or expert review.
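Orphan pages, listed above as a common crawlability failure, can be found by walking the internal link graph from the home page. The sketch below is a minimal illustration, assuming the link graph has already been exported from a crawler as a dictionary of page to outgoing internal links; the function name `find_orphans` and the sample URLs are hypothetical.

```python
from collections import deque


def find_orphans(links: dict[str, list[str]], home: str) -> set[str]:
    """Return known URLs that cannot be reached from `home` via internal links."""
    # Every URL that appears as a source or a target counts as "known".
    known = set(links) | {t for targets in links.values() for t in targets}

    # Breadth-first walk of the link graph, starting at the home page.
    seen = {home}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return known - seen


# Hypothetical site: /old-guide links out, but no page links to it.
site_links = {
    "/": ["/guides", "/pricing"],
    "/guides": ["/guides/seo-basics", "/"],
    "/guides/seo-basics": ["/guides"],
    "/pricing": [],
    "/old-guide": ["/guides"],
}
orphans = find_orphans(site_links, "/")
print(sorted(orphans))
```

In practice the list of known URLs would usually come from the XML sitemap as well, so that pages with no links in either direction are also caught.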

Content Strategy in 2026: Topical Coverage, Originality, and First-Hand Evidence

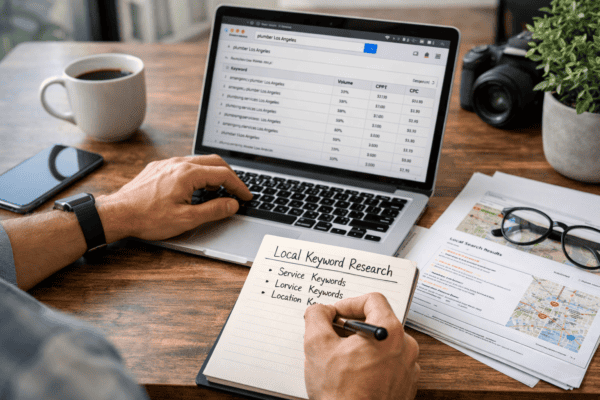

Content strategy in 2026 aims to make a site the best available source on a topic, not just a page that matches a keyword. Search systems can now extract answers, compare sources, and surface citations in richer results. That shifts the goal from “ranking a page” to building topical coverage with clear evidence and consistent quality.

Topical coverage means publishing a connected set of pages that map to a subject’s core concepts, subtopics, and common follow-up questions. Each page should have a single job: define terms, explain a process, compare options, or document a method. Internal links then show how each page supports the main topic, which helps both crawling and interpretation.

Originality matters because search engines can already summarise widely repeated information. Pages that add unique value tend to include first-hand data, direct observations, or analysis that is not available elsewhere. That can include original measurements, screenshots, code snippets, configuration details, or a clearly described testing method. When a claim depends on a source, cite the primary reference and link to it, such as Google’s guidance on helpful content.

- First-hand evidence: show what was done, what was observed, and what changed.

- Traceable sources: link to primary documentation, standards, or official announcements.

- Specificity: include exact settings, constraints, and edge cases, not broad statements.

These methods matter because AI-driven results reward pages that are easy to extract, verify, and cite. Clear structure (headings, definitions, steps, and tables) reduces ambiguity. Evidence reduces the risk of being treated as generic. Over time, strong topical coverage also improves internal discovery, refresh planning, and editorial consistency, which supports stable organic performance even as result layouts keep changing.

Technical SEO That Still Moves the Needle: Indexing, Performance, and Structured Data

Prioritise clean indexing, fast delivery, and machine-readable markup before chasing new formats. Start by validating crawl and index status in Google Search Console: review Indexing reports, inspect key URLs, and confirm canonical tags match the preferred version. Keep XML sitemaps current and return correct status codes (200, 301, 404) so Googlebot does not waste crawl budget.
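The canonical check described above can be scripted for a batch of pages. The following is a rough sketch using only the Python standard library, assuming the HTML has already been fetched; `CanonicalParser`, `canonical_status`, and the example URLs are illustrative names, not part of any Google tool.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Collect href values from <link rel="canonical"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel_values = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_values and attr.get("href"):
            self.canonicals.append(attr["href"])


def canonical_status(html: str, preferred_url: str) -> str:
    """Compare the page's canonical tag(s) with the preferred URL."""
    parser = CanonicalParser()
    parser.feed(html)
    found = parser.canonicals
    if not found:
        return "missing"
    if len(set(found)) > 1:
        return "conflicting"
    return "ok" if found[0] == preferred_url else "mismatch"


# Hypothetical page source for illustration.
page = (
    "<html><head>"
    '<link rel="canonical" href="https://example.com/guide">'
    "</head><body></body></html>"
)
print(canonical_status(page, "https://example.com/guide"))
```

The comparison here is an exact string match, which is deliberately strict: trailing slashes, protocol differences, and tracking parameters all show up as a mismatch worth reviewing.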

Next, tighten performance using PageSpeed Insights and Lighthouse. Target the Core Web Vitals thresholds: LCP under 2.5 seconds, INP under 200 ms, and CLS under 0.1 (Google). Reduce JavaScript and compress images to help LCP and INP, and use server-side caching to cut time to first byte.
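To make the thresholds concrete, the sketch below rates measured values against them. The "good" limits are the ones quoted above. The "needs improvement" limits (4.0 s, 500 ms, 0.25) are not stated in this guide and are taken from Google's published Core Web Vitals bands, so treat them as an assumption to verify. The function name `rate_metric` is illustrative.

```python
# (good upper bound, needs-improvement upper bound) per metric.
# Second value in each pair is an assumption not stated in this guide.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout shift score
}


def rate_metric(metric: str, value: float) -> str:
    """Classify a measured value as good, needs improvement, or poor."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


# Hypothetical field measurements for one page template.
measurements = {"LCP": 2.1, "INP": 350, "CLS": 0.3}
report = {name: rate_metric(name, value) for name, value in measurements.items()}
print(report)
```

Google assesses these metrics at the 75th percentile of real-user visits, so the values fed into a check like this should come from field data where possible, not from a single lab run.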

Then add structured data with valid JSON-LD and test it in the Rich Results Test. Use only schema types that match visible content, and monitor enhancements in Search Console. Watch out for blocked resources in robots.txt, inconsistent canonicals, and “spammy” markup that can cause a loss of rich results.
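As an illustration of the JSON-LD step, the sketch below builds an Article snippet that names its author and publisher. It is a minimal example under stated assumptions: the function `article_jsonld` and all sample values are placeholders, and the property set shown is a small subset of what Schema.org defines, not a complete or required list.

```python
import json


def article_jsonld(headline: str, url: str, date_published: str,
                   author_name: str, publisher_name: str) -> str:
    """Build a JSON-LD <script> tag describing an article, its author, and its publisher."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
    }
    # Escape "<" so a stray "</script>" inside a value cannot close the tag early.
    body = json.dumps(data, indent=2, ensure_ascii=False).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for illustration only.
snippet = article_jsonld(
    headline="SEO in 2026: what changed and what still works",
    url="https://example.com/seo-2026",
    date_published="2026-01-15",
    author_name="Jane Doe",
    publisher_name="Example Media",
)
print(snippet)
```

Generating the markup from the same data that renders the page helps keep the structured data aligned with visible content. The output should still be validated in the Rich Results Test before release.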

Authority and Trust in 2026: Links, Mentions, and Brand Signals

Authority in 2026 means Google can verify that a site and its authors deserve to be cited, not just ranked. Links still matter, but Google also reads unlinked mentions, brand searches, and consistent entity data across the web as trust signals. A strong backlink profile works best when links come from relevant pages, use natural anchor text, and sit in editorial content rather than footers or templates.

These signals work through entity understanding. Google connects a brand to people, products, and topics using on-page cues (clear authorship, about pages, references) and off-page evidence (press coverage, industry citations, and profiles). Keep business details consistent across key listings and profiles, and link them back to a single canonical site identity.

Practical focus should shift from “link building” to reputation building. Earn citations in trade publications, publish research others can reference, and strengthen author credibility with verifiable bios and past work. Use Schema.org markup to clarify organisation, person, and article relationships, and monitor brand queries and referral sources in Google Search Console.

Measurement and Forecasting: GA4, Search Console, and SEO Testing in 2026

In 2026, measurement splits into two approaches. Option A tracks rankings and sessions as the main success metrics. Option B measures search visibility and page-level outcomes, and tests changes before scaling.

Option A still helps with quick health checks, but it often misses what AI Overviews and richer results change: impressions can rise while clicks fall. Option B uses Google Analytics 4 (GA4) for outcomes, Google Search Console for search demand, and controlled SEO testing to prove impact.

| Area | Option A: report | Option B: test and forecast |
|---|---|---|
| Primary KPI | Rank position, organic sessions | Impressions, clicks, conversions, assisted revenue |
| Tool focus | Rank trackers, basic GA4 reports | Search Console queries + GA4 events and funnels |
| Change validation | Before/after comparisons | Split tests or holdouts; annotate releases |
| Forecasting | Traffic projections from rank gains | Query-level opportunity modelling and scenario ranges |

Key differences come down to attribution and confidence. GA4 event tracking ties organic visits to leads, sales, and micro-conversions. Search Console shows query demand and page-level click-through rate (CTR), which helps separate “visibility growth” from “traffic growth”.
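The distinction between visibility growth and traffic growth can be checked per page by comparing two periods of Search Console data. The sketch below assumes impressions and clicks have already been exported for each period; `PeriodStats`, `classify_change`, and the figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        """Click-through rate as a fraction; 0.0 when there are no impressions."""
        return self.clicks / self.impressions if self.impressions else 0.0


def classify_change(before: PeriodStats, after: PeriodStats) -> str:
    """Label a period-over-period change as visibility growth, traffic growth, or both."""
    more_impressions = after.impressions > before.impressions
    more_clicks = after.clicks > before.clicks
    if more_impressions and more_clicks:
        return "visibility and traffic growth"
    if more_impressions:
        return "visibility growth only"
    if more_clicks:
        return "traffic growth without added visibility"
    return "no growth"


# Hypothetical page: impressions rise while clicks fall.
before = PeriodStats(impressions=10_000, clicks=400)
after = PeriodStats(impressions=14_000, clicks=350)
print(classify_change(before, after), f"CTR {before.ctr:.1%} -> {after.ctr:.1%}")
```

Pages labelled "visibility growth only" are the ones where a rank or session report would look healthy while actual traffic declines.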

- Forecast with ranges: model best/base/worst cases using Search Console impressions, current CTR, and expected CTR lift from changes.

- Test high-impact edits (titles, internal links, templates) with controlled rollouts to avoid false wins from seasonality.

- Keep a shared release log so GA4 and Search Console shifts map to specific changes.
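The range-based forecast in the first bullet reduces to simple arithmetic: projected clicks equal impressions multiplied by current CTR and by one plus the expected relative CTR lift. The sketch below works through one example. The function name `forecast_clicks` and the scenario values are illustrative assumptions, and impressions are held constant for simplicity.

```python
def forecast_clicks(impressions: int, current_ctr: float,
                    lift_scenarios: dict[str, float]) -> dict[str, int]:
    """Project clicks per scenario; lift is a relative CTR change (0.10 means +10%)."""
    return {
        name: round(impressions * current_ctr * (1 + lift))
        for name, lift in lift_scenarios.items()
    }


# Hypothetical inputs: 50,000 monthly impressions at a 3% CTR.
scenarios = {"worst": -0.05, "base": 0.10, "best": 0.25}
forecast = forecast_clicks(50_000, 0.03, scenarios)
print(forecast)
```

With these inputs the baseline is 1,500 clicks, so the scenarios give 1,425, 1,650, and 1,875 clicks. Reporting all three keeps the uncertainty visible instead of committing to a single number.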

Frequently Asked Questions

How have Google’s ranking signals changed between 2023 and 2026?

Between 2023 and 2026, Google has put more weight on content quality, originality, and usefulness, while reducing the impact of low-effort SEO tactics. Signals tied to page experience and trust still matter, but Google now evaluates intent match, topical depth, and spam patterns more strictly. Links remain a factor, yet manipulation is easier to detect.

What role do AI-generated answers and search overviews play in SEO strategy in 2026?

AI-generated answers and search overviews shift SEO towards being cited, not just clicked. Prioritise clear entity signals, accurate facts, and concise summaries that models can reuse. Use structured data where relevant, keep pages fast, and strengthen E-E-A-T with named authors and sources. Track visibility in overviews, not only rankings and clicks.

Which technical SEO factors still have the biggest impact on crawling and indexing in 2026?

In 2026, crawling and indexing still depend most on clean site architecture, correct robots.txt and meta robots rules, and a reliable XML sitemap. Fast, stable server responses reduce crawl waste and timeouts. Canonical tags and consistent internal links prevent duplicate URLs. Strong HTTP status handling (200, 301, 404, 410) keeps index coverage accurate.
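A quick way to spot URLs blocked by robots.txt is to test them against the rules locally. The sketch below uses Python's standard `urllib.robotparser`; the rules and URLs are hypothetical. Note that this parser applies rules in file order, whereas Googlebot applies the most specific matching rule, so results can differ when Allow and Disallow rules overlap.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str],
                 user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for the user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules that block a script directory needed for rendering.
robots = """
User-agent: *
Disallow: /assets/js/
Disallow: /admin/
"""
candidates = [
    "https://example.com/guides/seo",
    "https://example.com/assets/js/app.js",
    "https://example.com/admin/login",
]
blocked = blocked_urls(robots, candidates)
print(blocked)
```

Blocking an admin area is usually intended, while a blocked script or stylesheet directory can stop pages from rendering fully for the crawler.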

How should content teams measure SEO success in 2026 beyond organic traffic?

Measure SEO success using outcomes and quality signals, not visits alone. Track organic conversions and revenue, lead quality (MQL-to-SQL rate), and assisted conversions. Monitor search visibility (impressions, average position, share of voice) and SERP features won. Use engagement and satisfaction signals such as dwell time, return visits, and branded search growth.

What link building practices still work in 2026 without increasing spam or penalty risk?

In 2026, low-risk link building focuses on earned, relevant mentions. Prioritise digital PR, original research, and expert commentary that attracts editorial links. Use partner and supplier pages only when the relationship is real. Reclaim unlinked brand mentions and fix broken backlinks. Avoid paid links, large-scale guest posting, and keyword-heavy anchor text.